Many a time we are faced with the challenge of understanding a large code repository. There is a plethora of tools out there, but sometimes you get stuck with a problem for which there simply isn't a tool. One such problem hit me today: I wanted to find the call graph of a function, say A, check where another function, say B, lies in it, and find the call path from A to B.

This is where code analysis libraries come in. They let us parse source code programmatically, so in my case there is no more manually exploring the call path.

One such library is libclang (from the awesome Clang/LLVM project). libclang is a C library, but it has Python bindings as well, which we are going to explore.

Credits - The following awesome blog helped me get started with libclang, and some of the code and examples may be from it.

http://eli.thegreenplace.net/2011/07/03/parsing-c-in-python-with-clang

Before you proceed, make sure of the following.

- LLVM (Clang) is installed and present in your computer's PATH.

- MinGW is installed and present in your computer's PATH.

- You are using 32-bit (x86) Windows. (I was unable to use it on x64 Windows; I kept getting access violation errors, perhaps due to a known bug.) I have not tested this on Linux, but it should work equally well on 32-bit Linux.

- Install the Python clang module from "https://github.com/llvm-mirror/clang/tree/master/bindings/python".

Here are the steps -

a. Download clang folder from the link

b. Put it in python's lib folder. On my windows 7 x86, I had to paste it in "C:\Python27\Lib".

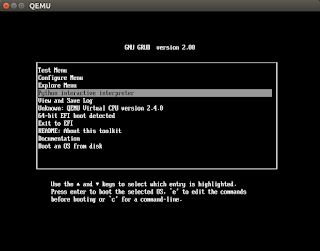

If you are not sure where the lib folder is on your system, open a python console, import a module for which you are sure that it exists in your system, say ctypes, and print the value of ctypes.__file__. This will give you an idea about the location of the folder. See the following screenshot.
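The same lookup can be done in a small script. This is a minimal sketch assuming Python 2.7 or 3.x; it also reports whether the interpreter itself is 32-bit or 64-bit, which is worth checking against the 32-bit requirement above (my assumption is that the interpreter's bitness is what has to match the libclang build). `python_bitness` and `stdlib_dir` are hypothetical helper names made up for this example.

```python
from __future__ import print_function

import ctypes
import os
import struct


# Hypothetical helper (not part of any library): pointer size in bits.
def python_bitness():
    """Return 32 or 64: the size of a C pointer in this interpreter, in bits."""
    return struct.calcsize("P") * 8


# Hypothetical helper: derive the standard-library ("Lib") folder from ctypes.
def stdlib_dir():
    """Return the folder that contains the ctypes package.

    ctypes.__file__ is the package's __init__ file inside Lib/ctypes, so two
    dirname() steps lead to the Lib folder itself.
    """
    ctypes_package_dir = os.path.dirname(os.path.abspath(ctypes.__file__))
    return os.path.dirname(ctypes_package_dir)


if __name__ == "__main__":
    print("This Python interpreter is %d-bit" % python_bitness())
    print("Lib folder:", stdlib_dir())
```

On the author's setup, the second line would be expected to point at C:\Python27\Lib.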

Let's suppose we have the following C++ code that we want to parse.

```cpp
// demo_code.cpp
class Person {
};

class Room {
public:
    void add_person(Person person) {
        // do stuff
    }

private:
    Person* people_in_room;
};

template <class T, int N>
class Bag {
};

int main() {
    Person* p = new Person();
    Bag<Person, 42> bagofpersons;
    return 0;
}
```

Let's start writing the Python script that will parse this code.

Step 1 Import clang

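```python
from __future__ import print_function  # lets the print() calls below run on Python 2.7 too
import clang.cindex
```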

Step 2 Load the C++ source code

```python
index = clang.cindex.Index.create()
translation_unit = index.parse("demo_code.cpp")
```
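As far as I know, `index.parse()` does not raise an exception for ordinary compile errors in the source: it still returns a translation unit and records the problems in `translation_unit.diagnostics`. So it is worth checking them before trusting the tree. A minimal sketch, assuming only the `severity`, `spelling` and `location` attributes of each diagnostic; `problems()` and the severity constants are hypothetical names for this example (the numbers mirror `clang.cindex.Diagnostic.Warning = 2` and `Error = 3`).

```python
# Severity values used by libclang; they mirror the constants
# clang.cindex.Diagnostic.Ignored/Note/Warning/Error/Fatal (0..4).
IGNORED, NOTE, WARNING, ERROR, FATAL = 0, 1, 2, 3, 4


# Hypothetical helper (not part of clang.cindex).
def problems(translation_unit, min_severity=WARNING):
    """List the translation unit's diagnostics at or above min_severity.

    Only translation_unit.diagnostics is read; for each diagnostic we use its
    severity, spelling (the message) and location (file, line, column).
    Returns strings shaped like compiler output: "file:line:col: message".
    """
    found = []
    for diag in translation_unit.diagnostics:
        if diag.severity >= min_severity:
            loc = diag.location
            found.append("%s:%d:%d: %s" % (loc.file, loc.line, loc.column, diag.spelling))
    return found
```

After Step 2, `for line in problems(translation_unit): print(line)` lists warnings and errors. Compiler flags can be passed through the `args` parameter, e.g. `index.parse("demo_code.cpp", args=["-std=c++11"])`.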

Step 3 Iterate over the syntax tree of the source code and print the names of its nodes

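```python
print('Translation unit:', translation_unit.spelling)

# Direct children of the translation unit: the top-level declarations.
for child in translation_unit.cursor.get_children():
    print(child.spelling)

# Every node of the syntax tree, visited in pre-order.
for node in translation_unit.cursor.walk_preorder():
    print("pre-order-traversal", node.spelling, node.get_usr())
```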

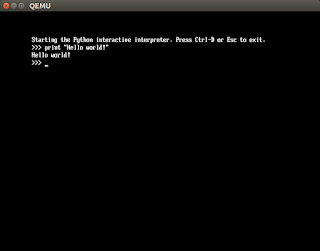

See the following screenshot.

|

| python libclang parsing over our source code demo_code.cpp |

- The spelling attribute used in the Python code gives the name of the entity we are referring to (an AST node, called a "cursor" in libclang), for example a class or function name.

- get_usr() gives us the entity's Unified Symbol Resolution (USR), a string that works like a global name for it. This helps in identifying an entity uniquely when we have multiple source files.
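The pre-order walk prints every node in one flat list, so the nesting is lost. A sketch that keeps the tree shape by recursing over `get_children()` and indenting each level; it assumes a `clang.cindex.Cursor` whose `kind.name` gives the enum name (such as `CLASS_DECL`), and `dump_ast()` is a hypothetical helper name. Being recursive, it could hit Python's recursion limit on very deep trees.

```python
# Hypothetical helper (not part of clang.cindex).
def dump_ast(cursor, depth=0, lines=None):
    """Return the tree under `cursor` as indented "KIND spelling" lines.

    Only cursor.kind.name, cursor.spelling and cursor.get_children() are
    used. Each child is indented two spaces deeper than its parent.
    """
    if lines is None:
        lines = []
    lines.append("%s%s %s" % ("  " * depth, cursor.kind.name, cursor.spelling))
    for child in cursor.get_children():
        dump_ast(child, depth + 1, lines)
    return lines
```

Usage: `print("\n".join(dump_ast(translation_unit.cursor)))`.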

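Here is the complete Python code.

```python
from __future__ import print_function  # print() works the same on Python 2.7 and 3

import sys

import clang.cindex

# Parse the file given on the command line, or demo_code.cpp by default.
filename = sys.argv[1] if len(sys.argv) > 1 else "demo_code.cpp"

index = clang.cindex.Index.create()
translation_unit = index.parse(filename)
print('Translation unit:', translation_unit.spelling)

# Direct children of the translation unit: the top-level declarations.
for child in translation_unit.cursor.get_children():
    print(child.spelling)

# Every node of the syntax tree, visited in pre-order, with its USR.
for node in translation_unit.cursor.walk_preorder():
    print("preorder", node.spelling, node.get_usr())
```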
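Building on the same cursor API, here is a sketch of the problem from the introduction: the call graph of a function A and a call path to B. It is my own hypothetical approach, not part of the script above. It assumes `walk_preorder()`, `kind.name`, `spelling`, `get_usr()`, `is_definition()` and the `referenced` property (which points a `CALL_EXPR` at the declaration it calls), keys functions by USR as described above, and uses breadth-first search so the returned path is a shortest one. `build_call_graph` and `find_call_path` are hypothetical names. Calls through function pointers are not followed, and virtual calls resolve to the declared method rather than to every override.

```python
from collections import deque

# Cursor kinds treated as function bodies (names of clang.cindex.CursorKind values).
FUNCTION_KINDS = ("FUNCTION_DECL", "CXX_METHOD", "CONSTRUCTOR", "DESTRUCTOR", "FUNCTION_TEMPLATE")


# Hypothetical helper (not part of clang.cindex).
def build_call_graph(root):
    """Map each function defined under `root` (e.g. translation_unit.cursor)
    to the set of functions it calls, keyed by USR.

    Returns (graph, names): graph is {caller_usr: set(callee_usrs)} and names
    maps every USR seen back to a readable spelling.
    """
    graph, names = {}, {}
    for node in root.walk_preorder():
        if node.kind.name in FUNCTION_KINDS and node.is_definition():
            caller = node.get_usr()
            names[caller] = node.spelling
            callees = graph.setdefault(caller, set())
            # Every call expression inside this definition is an edge.
            for inner in node.walk_preorder():
                if inner.kind.name == "CALL_EXPR" and inner.referenced is not None:
                    callee = inner.referenced.get_usr()
                    names.setdefault(callee, inner.referenced.spelling)
                    callees.add(callee)
    return graph, names


# Hypothetical helper: breadth-first search over {node: iterable of callees}.
def find_call_path(graph, start, goal):
    """Return a shortest list of nodes from start to goal, or None if unreachable."""
    previous = {start: None}  # node -> the node we reached it from
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            path = []
            while current is not None:
                path.append(current)
                current = previous[current]
            return path[::-1]
        for callee in graph.get(current, ()):
            if callee not in previous:
                previous[callee] = current
                queue.append(callee)
    return None
```

Usage: `graph, names = build_call_graph(translation_unit.cursor)`, then look up the USRs of A and B (for example via `{name: usr for usr, name in names.items()}`), call `find_call_path(graph, usr_a, usr_b)`, and map the resulting USRs back through `names`.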

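This is my first attempt at parsing source code with a Python script, and I believe it will help me greatly in debugging and understanding source code as I learn more. I hope it helps you as well.

References-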

- Installing clang on windows - http://blog.johannesmp.com/2015/09/01/installing-clang-on-windows-pt2

- Parsing C++ in Python with Clang - http://eli.thegreenplace.net/2011/07/03/parsing-c-in-python-with-clang

- libclang slides - http://llvm.org/devmtg/2010-11/Gregor-libclang.pdf